accelerate fails immediately when launching multi-GPU parallel training

(kdc) d25-lfz@MILab:/media/ubuntu/data/lfz/kdc/kuavo_data_challenge$ accelerate launch --config_file configs/accelerate/accelerate_config.yaml kuavo_train/train_policy_with_accelerate.py --config-name=act_config task=task1 method=act

/media/ubuntu/data/lfz/miniconda3/envs/kdc/lib/python3.10/site-packages/pydantic/_internal/_generate_schema.py:2249: UnsupportedFieldAttributeWarning: The 'repr' attribute with value False was provided to the `Field()` function, which has no effect in the context it was used. 'repr' is field-specific metadata, and can only be attached to a model field using `Annotated` metadata or by assignment. This may have happened because an `Annotated` type alias using the `type` statement was used, or if the `Field()` function was attached to a single member of a union type.

warnings.warn(

/media/ubuntu/data/lfz/miniconda3/envs/kdc/lib/python3.10/site-packages/pydantic/_internal/_generate_schema.py:2249: UnsupportedFieldAttributeWarning: The 'frozen' attribute with value True was provided to the `Field()` function, which has no effect in the context it was used. 'frozen' is field-specific metadata, and can only be attached to a model field using `Annotated` metadata or by assignment. This may have happened because an `Annotated` type alias using the `type` statement was used, or if the `Field()` function was attached to a single member of a union type.

warnings.warn(

W0304 17:25:11.423000 1022108 site-packages/torch/distributed/elastic/multiprocessing/api.py:900] Sending process 1022279 closing signal SIGTERM

W0304 17:25:11.424000 1022108 site-packages/torch/distributed/elastic/multiprocessing/api.py:900] Sending process 1022280 closing signal SIGTERM

E0304 17:25:11.839000 1022108 site-packages/torch/distributed/elastic/multiprocessing/api.py:874] failed (exitcode: -11) local_rank: 0 (pid: 1022277) of binary: /media/ubuntu/data/lfz/miniconda3/envs/kdc/bin/python3.10

Traceback (most recent call last):

File "/media/ubuntu/data/lfz/miniconda3/envs/kdc/bin/accelerate", line 6, in <module>

sys.exit(main())

File "/media/ubuntu/data/lfz/miniconda3/envs/kdc/lib/python3.10/site-packages/accelerate/commands/accelerate_cli.py", line 50, in main

args.func(args)

File "/media/ubuntu/data/lfz/miniconda3/envs/kdc/lib/python3.10/site-packages/accelerate/commands/launch.py", line 1226, in launch_command

multi_gpu_launcher(args)

File "/media/ubuntu/data/lfz/miniconda3/envs/kdc/lib/python3.10/site-packages/accelerate/commands/launch.py", line 853, in multi_gpu_launcher

distrib_run.run(args)

File "/media/ubuntu/data/lfz/miniconda3/envs/kdc/lib/python3.10/site-packages/torch/distributed/run.py", line 883, in run

elastic_launch(

File "/media/ubuntu/data/lfz/miniconda3/envs/kdc/lib/python3.10/site-packages/torch/distributed/launcher/api.py", line 139, in __call__

return launch_agent(self._config, self._entrypoint, list(args))

File "/media/ubuntu/data/lfz/miniconda3/envs/kdc/lib/python3.10/site-packages/torch/distributed/launcher/api.py", line 270, in launch_agent

raise ChildFailedError(

torch.distributed.elastic.multiprocessing.errors.ChildFailedError:

=========================================================

kuavo_train/train_policy_with_accelerate.py FAILED

---------------------------------------------------------

Failures:

[1]:

time : 2026-03-04_17:25:11

host : MILab

rank : 1 (local_rank: 1)

exitcode : -11 (pid: 1022278)

error_file: <N/A>

traceback : Signal 11 (SIGSEGV) received by PID 1022278

---------------------------------------------------------

Root Cause (first observed failure):

[0]:

time : 2026-03-04_17:25:11

host : MILab

rank : 0 (local_rank: 0)

exitcode : -11 (pid: 1022277)

error_file: <N/A>

traceback : Signal 11 (SIGSEGV) received by PID 1022277

=========================================================
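The report above shows only `Signal 11 (SIGSEGV)` with `error_file: <N/A>`, so no Python traceback is available. One possible way to get more detail, assuming the crash happens after the Python interpreter has started, is to enable Python's built-in `faulthandler` through the `PYTHONFAULTHANDLER` environment variable. Child processes inherit it from the launching shell. This is a diagnostic sketch, not part of the original fix:

```shell
# Ask every Python process started from this shell to dump its Python
# stack to stderr when it receives a fatal signal such as SIGSEGV.
export PYTHONFAULTHANDLER=1
```

Re-running the same `accelerate launch` command afterwards should print the Python frames that were active in each rank when the segfault occurred, if the interpreter was running at that point.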

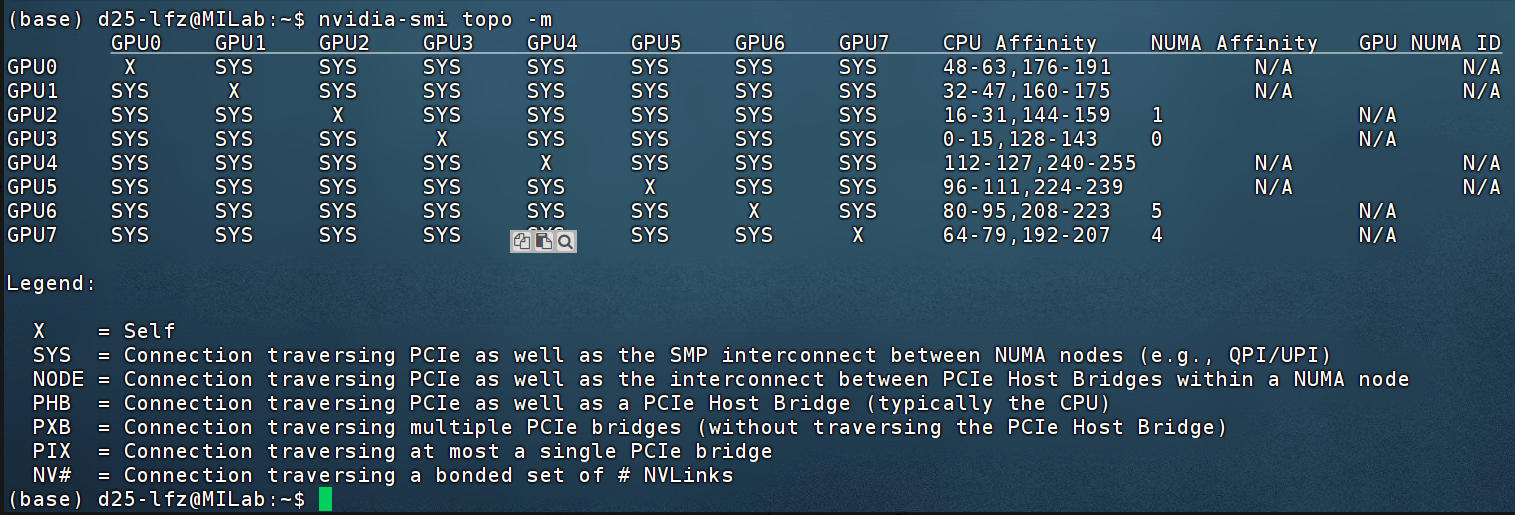

The figure shows the GPU topology: 8× 4090 GPUs with no NVLink.
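A topology matrix like this can usually be printed with `nvidia-smi topo -m`. Whether the screenshot was produced this way is an assumption. In that matrix, entries such as `PIX`, `PXB`, `PHB`, `NODE` and `SYS` denote PCIe or system paths between GPUs, while `NV#` entries denote NVLink. The helper name `show_gpu_topology` below is hypothetical:

```shell
# Hypothetical helper: print the GPU interconnect matrix if nvidia-smi exists.
show_gpu_topology() {
    if ! command -v nvidia-smi >/dev/null 2>&1; then
        echo "nvidia-smi not found; skipping topology check"
        return 0
    fi
    # -m prints the GPU-to-GPU connection matrix (PIX/PXB/PHB/NODE/SYS/NV#).
    nvidia-smi topo -m
}
```

Calling `show_gpu_topology` on the training host and confirming that no `NV#` entries appear would match the "no NVLink" observation.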

accelerate_config.yaml

compute_environment: LOCAL_MACHINE

distributed_type: MULTI_GPU # Multi-GPU mode

fp16: true # Set according to your amp configuration

machine_rank: 0

main_process_ip: null

main_process_port: null

main_training_function: main

num_machines: 1

num_processes: 4 # 4 processes in this example, one per GPU listed in gpu_ids

gpu_ids: "4,5,6,7" # Which GPU ID's to be used for training

Solution: set the following environment variables before running accelerate launch:

export NCCL_DEBUG=INFO

export NCCL_ASYNC_ERROR_HANDLING=1

export NCCL_SHM_DISABLE=1

export NCCL_P2P_DISABLE=1

export NCCL_IB_DISABLE=1
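The exports above can be collected into one script together with the launch command from the top of this post. This is a minimal sketch: the function name `launch_training` is hypothetical, and the per-variable comments reflect the NCCL documentation as far as I know rather than anything measured here. The original does not say which single variable resolved the crash, so re-enabling them one at a time may narrow it down.

```shell
# Verbose NCCL logging, useful for seeing which transport each rank selects.
export NCCL_DEBUG=INFO
# Read by PyTorch rather than NCCL itself; newer PyTorch releases may warn
# and prefer TORCH_NCCL_ASYNC_ERROR_HANDLING (assumption: check your version).
export NCCL_ASYNC_ERROR_HANDLING=1
# Disable the shared-memory transport between processes on the same host.
export NCCL_SHM_DISABLE=1
# Disable direct GPU-to-GPU peer access (NVLink or PCIe P2P).
export NCCL_P2P_DISABLE=1
# Disable the InfiniBand/RoCE transport so NCCL falls back to IP sockets.
export NCCL_IB_DISABLE=1

# Hypothetical helper wrapping the launch command from the original report.
launch_training() {
    accelerate launch \
        --config_file configs/accelerate/accelerate_config.yaml \
        kuavo_train/train_policy_with_accelerate.py \
        --config-name=act_config task=task1 method=act
}
```

After sourcing the script from the repository root, running `launch_training` starts the job with the workaround applied. With P2P and shared memory both disabled, traffic between GPUs on the same host is expected to go through slower paths, so throughput may drop compared with a working P2P setup.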